GPT4All is an ecosystem that’s designed to train and deploy customised large language models that run locally on consumer-grade CPUs. According to the GitHub page, “The goal is simple — be the best instruction-tuned assistant-style language model that any person or enterprise can freely use, distribute and build on.”

A GPT4All model is a single file, 3GB to 8GB in size, that individuals can download and plug into the GPT4All open-source ecosystem software. Nomic AI, the company behind GPT4All, supports and maintains the software ecosystem to ensure quality and security. It also leads efforts to make it easier for individuals and businesses to train and deploy their own large language models.

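The team behind GPT4All stated in a document, “GPT4All models are artefacts produced through a process known as neural network quantisation. A multi-billion parameter Transformer Decoder usually takes 30+ GB of VRAM to execute a forward pass.” Quantisation stores each of the model's weights at lower numerical precision, which shrinks the model enough to run on an ordinary CPU rather than a high-end GPU.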
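To see why quantisation closes the gap between 30+ GB and a file of a few gigabytes, the rough arithmetic can be sketched in a few lines of Python. This is an illustration under stated assumptions, not GPT4All's actual method: the 7-billion-parameter size, the bit widths and the simple "absmax" 4-bit scheme are hypothetical examples chosen for clarity, and the estimate counts weights only, ignoring activations and the extra scale values real schemes store.

```python
# Illustrative sketch only: the 7-billion-parameter size, the bit widths and
# the absmax scheme are hypothetical examples, not GPT4All's own configuration.


def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Approximate storage for the weights alone, in decimal gigabytes.

    Ignores activations, caches and any per-block scale factors that a
    real quantisation scheme would also need to store.
    """
    return num_params * bits_per_param / 8 / 1e9


def quantise_absmax(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Symmetric absmax quantisation: map floats to small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # largest signed value, e.g. 7 for 4 bits
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0:  # all-zero input: any scale works
        return [0] * len(weights), 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantise(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    params = 7_000_000_000  # hypothetical model size
    for bits in (32, 16, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gb(params, bits):.1f} GB")

    w = [0.12, -0.5, 0.33, 0.0, 0.49]
    q, s = quantise_absmax(w, bits=4)
    print(q, [round(x, 3) for x in dequantise(q, s)])
```

For the hypothetical 7-billion-parameter model, 32-bit weights come to 28GB, in line with the 30+ GB figure once runtime overhead is added, while 4-bit weights come to 3.5GB, the low end of the 3GB to 8GB file sizes quoted above. The trade-off is precision: each dequantised weight can be off by up to half the scale step.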

What can GPT4All really do?

Like many chatbots available today, GPT4All can answer questions on almost any topic and write emails, documents, creative stories, poems, songs and plays. It can also work with documents, for example by summarising their contents.

Like OpenAI’s GPT-4 and Google Bard, GPT4All can also write code, although its coding capabilities are still being improved.

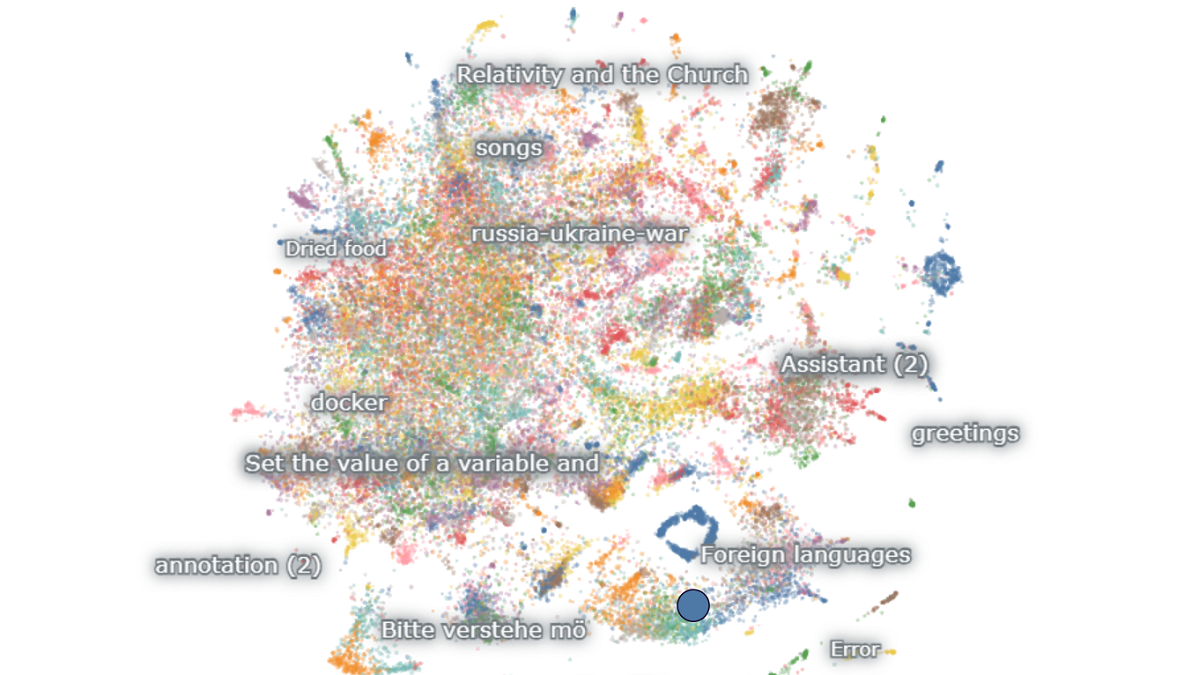

To develop a robust instruction-tuned assistant using your own data, it’s necessary to carefully curate high-quality training and instruction-tuning datasets, said Nomic AI. With that in mind, the team has also created a platform named Atlas, which is designed to simplify the process of managing and curating training data for large language models.

Isa Muhammad is a writer and video game journalist covering many aspects of entertainment media including the film industry. He's steadily writing his way to the sharp end of journalism and enjoys staying informed. If he's not reading, playing video games or catching up on his favourite TV series, then he's probably writing about them.