Tech giant Apple has released new and updated tools that developers can use to build apps for its upcoming Vision Pro headset.

While the company’s first mixed reality headset is scheduled for release in early 2024, Apple is encouraging developers to start building applications for it now.

As part of its push to bring the headset to market on time, Apple’s announcement includes the release of the visionOS SDK alongside an updated Xcode, Simulator and Reality Composer Pro. These resources are now available to developers through the visionOS developer website.

Although some of these tools will be recognisable to Apple developers, Simulator and Reality Composer Pro have been tailored specifically for the headset, and Reality Composer Pro is an entirely new addition to the developer toolkit.

Immersive testing environment

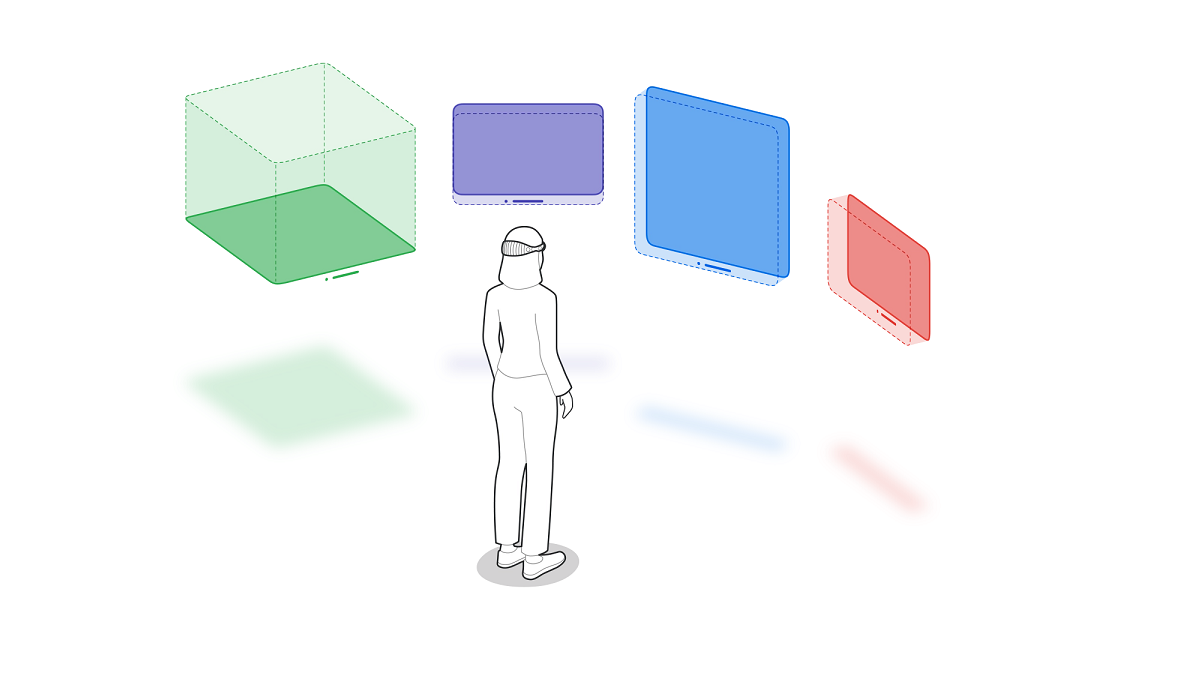

Simulator acts as a virtual Vision Pro, letting developers test their apps before they have access to the physical headset and see how those apps will look and behave on the device.

Reality Composer Pro, meanwhile, is designed to make it easier for developers to build interactive scenes by combining 3D models, sounds and textures.

Alongside the visionOS SDK, Apple has confirmed plans to open several ‘Developer Labs’ around the world, where developers will be able to try the headset in person and test their apps on it.

The visionOS beta also includes a range of environments that developers can use. “Environments let you transform the space around you, so apps can extend beyond the dimensions of your room,” Apple wrote. “Choose from a selection of beautiful landscapes, or magically replace your ceiling with a clear, open sky.”

Apple says that starting in July, developers will be able to apply to receive Apple Vision Pro development kits.

Isa Muhammad is a writer and video game journalist covering many aspects of entertainment media including the film industry. He's steadily writing his way to the sharp end of journalism and enjoys staying informed. If he's not reading, playing video games or catching up on his favourite TV series, then he's probably writing about them.