A new study from Binghamton University explores how augmented reality (AR) can improve collaboration between humans and robots.

The relationship between humans and robots has long captured the imagination, whether in stories of a coexistence that makes life simpler or of a robot uprising in which machines outwit their makers. Theatrics aside, robots are becoming increasingly common, especially in the workplace.

One researcher who sees future potential in this workplace relationship is Shiqi Zhang, an assistant professor of computer science at Binghamton University in New York. Zhang, along with co-authors Jack Albertson and Kishan Chandan, presented a paper at the Conference on Robot Learning that explores future avenues for AR in human-robot collaboration, as well as the issues that still need to be ironed out.

Augmented collaboration

Unlike virtual reality (VR), augmented reality blends the real and virtual worlds. The user keeps a view of the real world, with digital content overlaid on top of it. This overlay can range from simple text prompts to a substantially altered view of what is in front of the user.

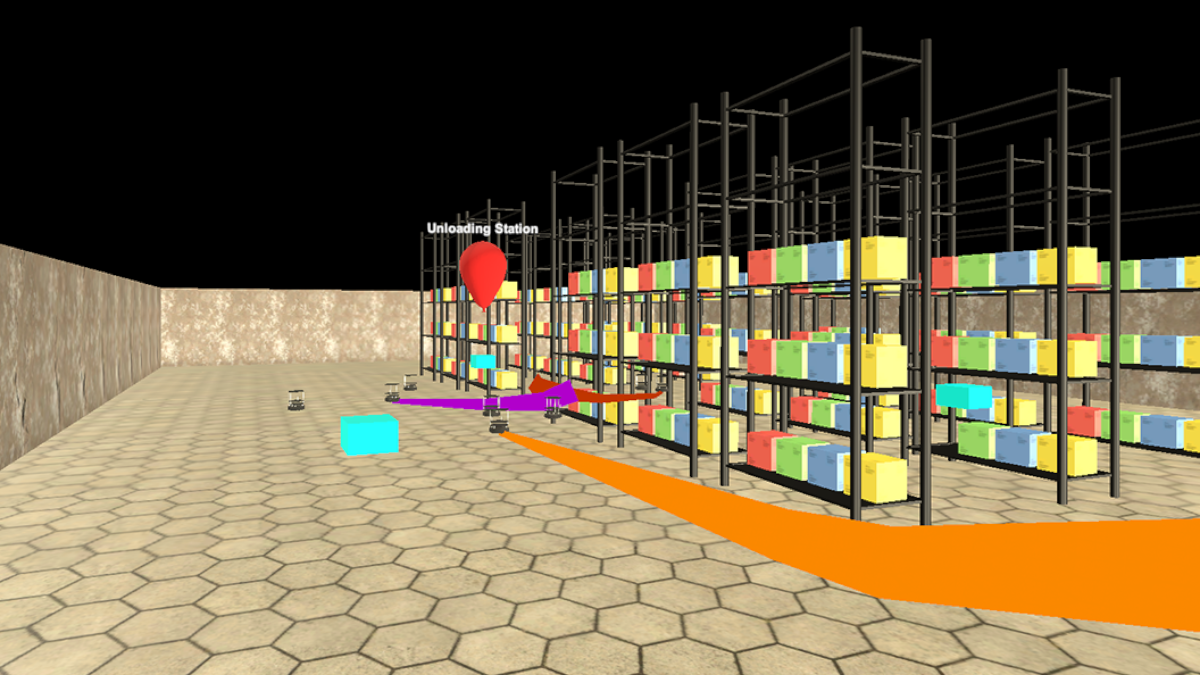

As robots are increasingly used in the workplace, the study focused on collaboration between humans and robots. The researchers gathered data using both virtual simulations and small-scale real-world setups. In the study, three robots were placed in a makeshift warehouse and tasked with picking up boxes and delivering them to a drop-off point. While the robots do the heavy lifting, the human uses AR to track their movements and see when each one has reached its designated drop-off point.

One of the challenges in the research was working out what information the human actually needs. Too little information could lead people to lose track of their robot co-workers, making accidents more likely. Too much, however, could be overwhelming and make the task far too complex. The information shown to the human also has to adapt from moment to moment, so that it always reflects what is happening in real time.

Potential for the future

Other studies of AR-based human-robot collaboration have relied on manually defined visualisation strategies, in which the user decides what information is shown. Zhang and his students instead used imitation learning, a machine-learning approach in which a program learns to mimic the decisions of a human expert.

As Zhang explained, “The expert uses a simulation platform. And we let the robots and the human avatar work on a collaborative delivery task in a warehouse environment. The expert will pause and say, ‘In this situation, the AR should visualise this and this,’ then the simulation continues. Through thousands of demonstrations like this, the imitation learning algorithm works out a policy and in time can dynamically design what’s useful to display.”

As a result, the AR display can show key information, such as where each robot is, to help avoid collisions. Details about successful drop-offs, inventory, or necessary repairs can also be presented to the human when they become relevant.
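The article does not describe how the learned policy works internally, so the following is only a minimal sketch of the general idea behind imitation learning, not Zhang's method. It uses a simple swapped-in technique, behavioural cloning with a k-nearest-neighbour policy: given a new situation, it finds the most similar expert demonstrations and displays whatever a majority of them displayed. The state features, the AR element names such as `robot_positions` and `dropoff_confirmed`, the `Demo` record and the `knn_policy` function are all hypothetical.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Demo:
    """One expert demonstration (hypothetical format, not from the study)."""
    # Hypothetical state features, each scaled to [0, 1]:
    # (how close the nearest robot is, how recently a drop-off happened)
    state: tuple[float, ...]
    # Hypothetical AR elements the expert said should be visualised here
    shown: frozenset[str]


def knn_policy(demos: list[Demo], state: tuple[float, ...], k: int = 3) -> set[str]:
    """Behavioural cloning via k-nearest neighbours: display each AR element
    that a strict majority of the k most similar demonstrations displayed."""
    nearest = sorted(demos, key=lambda d: math.dist(d.state, state))[:k]
    votes = Counter(item for d in nearest for item in d.shown)
    return {item for item, count in votes.items() if count > len(nearest) / 2}


# Toy demonstrations standing in for the expert's pause-and-annotate sessions.
DEMOS = [
    Demo((0.9, 0.1), frozenset({"robot_positions"})),
    Demo((0.8, 0.0), frozenset({"robot_positions"})),
    Demo((0.9, 0.9), frozenset({"robot_positions", "dropoff_confirmed"})),
    Demo((0.1, 0.9), frozenset({"dropoff_confirmed"})),
    Demo((0.2, 0.8), frozenset({"dropoff_confirmed"})),
    Demo((0.1, 0.1), frozenset()),
]

if __name__ == "__main__":
    # A robot is close and nothing was just delivered.
    print(sorted(knn_policy(DEMOS, (0.85, 0.05))))
    # No robot nearby, but a drop-off just happened.
    print(sorted(knn_policy(DEMOS, (0.1, 0.85))))
```

A system trained on thousands of demonstrations would likely use richer state and a learned model rather than a nearest-neighbour lookup; the majority vote here simply keeps a display element only when most of the similar expert decisions included it.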

Zhang’s research is ongoing, and he intends to move the study from small-scale simulations into a real warehouse. Testing at this larger scale should yield more accurate real-world data and allow safety concerns to be assessed, with the aim of wider adoption in the future.

Robots already assist workers in fields such as car manufacturing and even medicine. AR-assisted training is also becoming more popular in the workplace, pointing towards a collaborative future in which people and technology work together to create a potentially more productive and safer work environment.

Paige Cook is a writer with a multimedia background. She has experience covering video games and technology, as well as freelance experience in video editing, graphic design, and photography. Paige is a massive fan of the movie industry and loves a good TV show; if she is not watching something interesting, she is probably playing video games or buried in a good book. Her latest addiction is virtual photography, and she currently spends far too much time taking pretty pictures in games rather than actually finishing them.