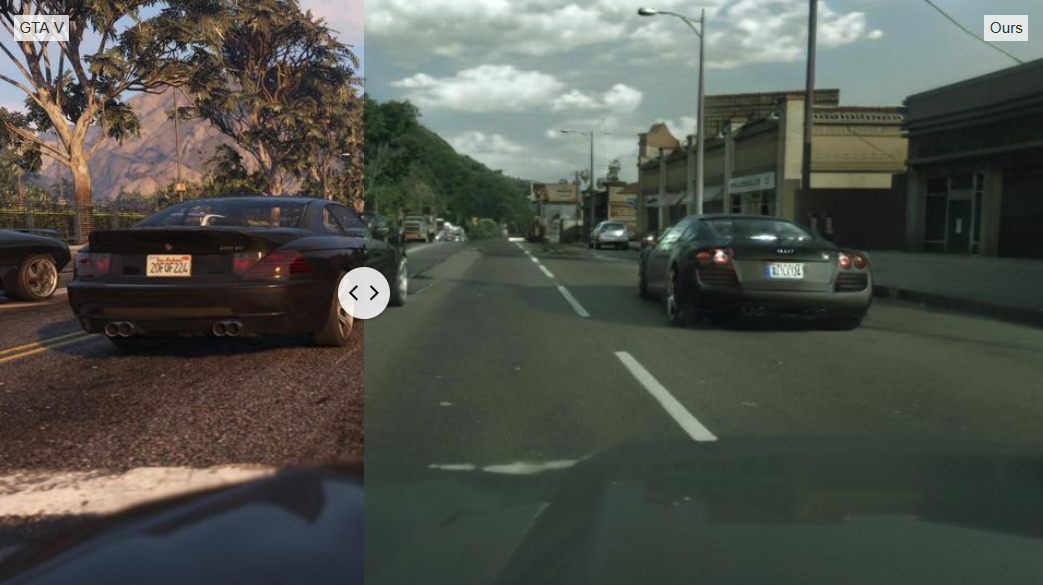

Researchers at Intel recently released footage showing the game before and after a new machine learning (ML) process is applied. The before footage shows the game as it appears to players around the world: an impressively detailed urban environment, but clearly a videogame. The footage after the ML process is applied is… incredible. It’s much harder to tell that it’s a synthetic reality within a videogame. The colours are subtler, the environment greener, and the clouds and reflections more realistic. It’s eerily lifelike.

Researchers Stephan R. Richter, Hassan Abu AlHaija, and Vladlen Koltun used a novel approach to image enhancement that draws on two sources. The first is the Cityscapes dataset, which offers thousands of stereo video sequences recorded in city environments and was created primarily for the automotive and autonomous vehicle markets. The second is geometric data from GTA V itself.

The new process updates the game frame by frame, using both sources to create an experience which, if not entirely lifelike, is at least a glimpse of where gaming, and other experiences, may be in the very near future.

Beyond Games

While the GTA prototype is fascinating, the real value in the work is the use of machine learning in upscaling digital content. This could have major implications for older content, including film, TV and games, which could be improved without having to be remastered manually. It could also point a way forward for future interactive worlds, with a single shared ‘base’ experience being upscaled dynamically, in real time, to reach a broad audience across a range of different devices.

You can find the research paper and more in-depth information on the Intel Labs GitHub repository.

Brian has been working in the games industry since the mid-1990s, when he joined the legendary studio DMA Design as a writer on the original Grand Theft Auto. Since then he's worked with major publishers and founded both his own digital agency and the Scottish Games Network. At various times he's worked as a journalist, editor, narrative designer, lecturer, executive producer, and director.