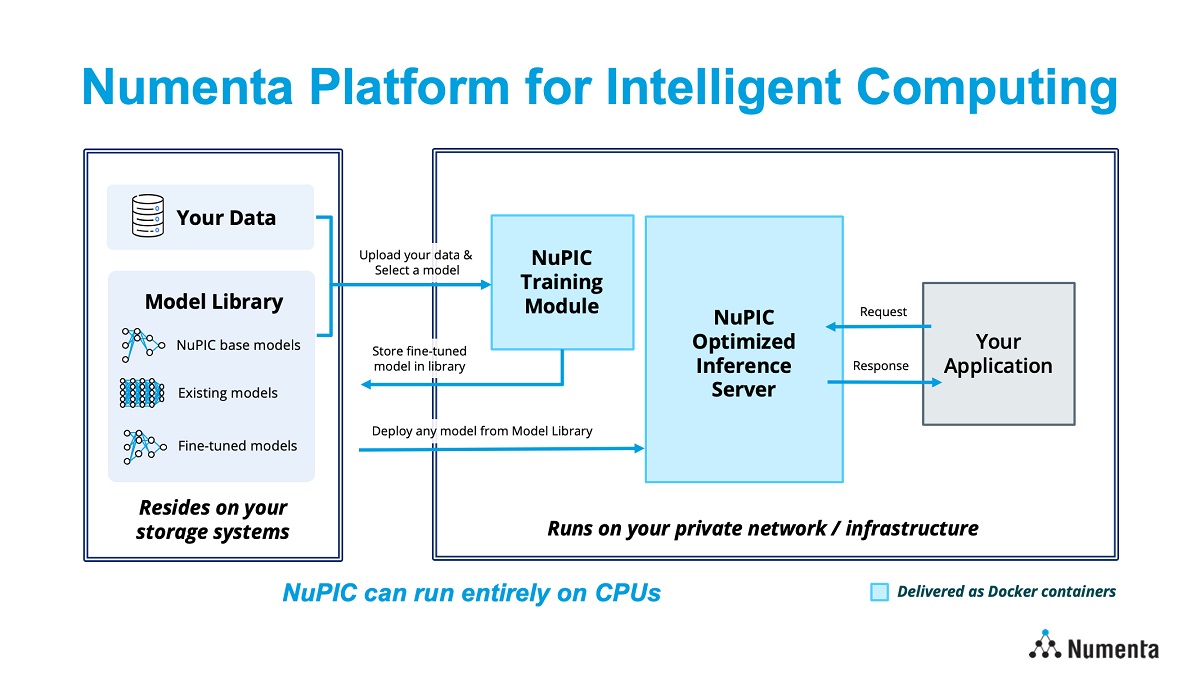

Numenta, a provider of scalable, neuroscience-based AI solutions, has unveiled its commercial AI product, the Numenta Platform for Intelligent Computing (NuPIC). Drawing on two decades of neuroscience research, NuPIC uses Numenta’s own architecture, data structures and algorithms to run large language models (LLMs) on regular CPUs.

The platform joins the AI systems that offer businesses performance improvements, cost reductions, privacy, safety and manageability. It is designed so that developers and software engineers can use it without in-depth knowledge of deep learning.

Subutai Ahmad, CEO of Numenta, says, “We recognise that people are in a wave of AI confusion. Everyone wants to reap the benefits, but not everyone knows where to start or how to achieve the performance they need to put LLMs into production.

“With our optimised inference server, model library and training module, you can select the right model for your unique business needs, fine-tune them on your data, and run them at extremely high throughput and low latency on CPUs, significantly faster than on an Nvidia A100 GPU, all with utmost security and privacy.”

Making AI more efficient

NuPIC runs AI models on CPUs, giving customers consistently high performance and low latency during inference without requiring complex, costly or hard-to-obtain GPU infrastructure.

The platform also runs within the customer’s own infrastructure, either on-premises or in a private cloud on any major cloud provider. Its flexible model library makes it easy to choose the right tool for the job. With a range of production-ready models, from BERTs to GPTs, customers can choose to optimise for accuracy or speed.

NuPIC is backed by a team of AI experts who can help provide a seamless experience in deploying LLMs in production. Numenta is currently providing access to NuPIC to a select number of customers. Individuals who are interested in early access can sign up and request a demo.

Isa Muhammad is a writer and video game journalist covering many aspects of entertainment media including the film industry. He's steadily writing his way to the sharp end of journalism and enjoys staying informed. If he's not reading, playing video games or catching up on his favourite TV series, then he's probably writing about them.