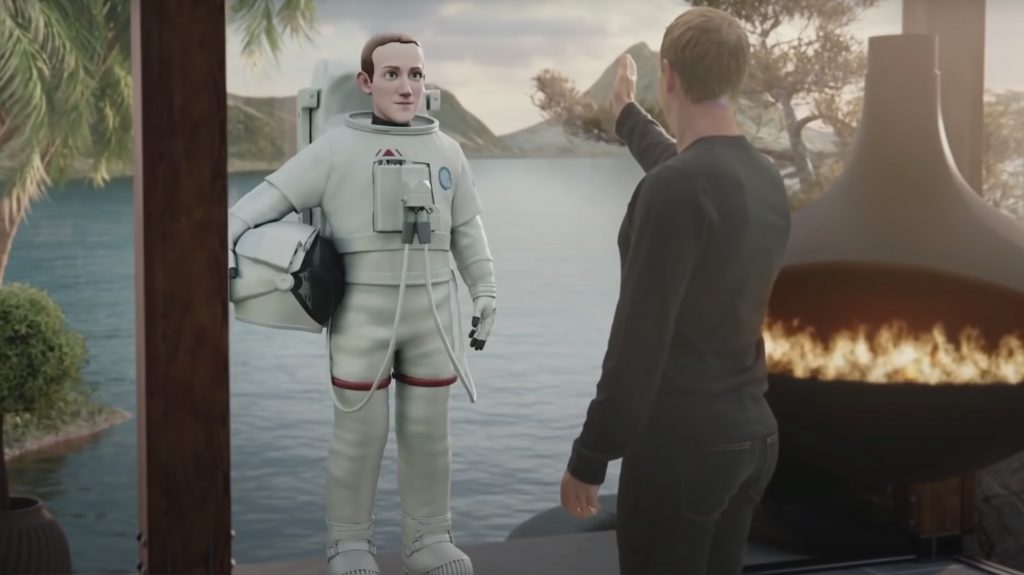

Social giant Mark Zuckerberg was recently interviewed by tech commentator Lex Fridman for his podcast, and the two-hour chat revealed some interesting updates on Meta’s progress and discoveries in building what he hopes will be the definitive interpretation of the metaverse.

The topics covered were broad and sweeping and Zuckerberg, clearly at ease, opened up on subjects such as security and identity on Facebook under a surprisingly candid grilling from Fridman.

However, it was his comments and thoughts about Meta’s place in the metaverse that caught our attention, ranging from teasing glimpses at Meta’s upcoming virtual creations to their experiments with – and eventual decision to give up on – giving VR users arms…

“VR is cool, you wear the goggles and you feel like you’re in a place. But you can’t really interact with it until you have hands. But what’s the right way to represent them?” he asks. “When I look down in the real world I see an arm, so it’s going to be super weird to just see a hand, right? But this turned out not to be the case.

The metaverse: Mostly ‘armless…

“There’s an issue with ‘what’s your elbow angle’? And if your elbow angle isn’t where we’re interpolating, it creates this very uncomfortable feeling that breaks the feeling of presence. But it turns out that if you just show the hands, and you don’t show the arms, it’s fine for people.”

Bingo. Problem solved. And with the thorny issue of VR limbs settled, he went on to tease improvements and iterations for Meta’s next Quest and VR hardware further down the line.

“When we’re designing the next version of Quest, a big focus for us is face tracking and eye tracking. So you can make eye contact. That’s something you can’t do over video conferencing. It’s amazing how far video conferencing has gotten without the ability to make eye contact.”

Apple’s VR vision

And, in what appears to be a light jab at the much-rumoured goggles/glasses en route from Apple, he bemoans the lightweight efforts of ‘other companies’ in the field, which he suggests may forgo the kind of eye tracking that he believes is required for true immersion.

“It’s not clear to me that the other companies are going to prioritise putting these features in the hardware because there are trade-offs,” he argues. “It adds weight to the device, maybe it adds some thickness. You could totally see another company taking the approach of ‘let’s just make the thinnest, lightest thing possible’. But I want to design the most human thing possible that creates the richest sense of presence.”

Interesting stuff, but perhaps the most arresting aspects of the chat are the timescales Zuckerberg predicts for this metaverse ‘immersion’ and his concept of the metaverse not as a place but simply as ‘a time’: a time when people spend more of their lives in that space than they do in the real world. It builds on the fact that we already spend a lot of time (a majority of our time?) in 2D digital spaces such as our computer screens or phones.

When will the metaverse arrive?

“A lot of this will be possible in a few-year period to a five-year period. We don’t need everything to be solved to deliver this full sense of presence,” he explains.

“A lot of people think that the metaverse is about a place. But one definition of this is that it’s about a time – a time when immersive digital worlds become that primary way that we live our lives and spend our time.

“We already live a lot of our life in digital worlds. They’re not 3D virtual reality but I do a lot of meetings over video, or writing things over email or WhatsApp.”

“[The metaverse] will happen when we get a lot of the work things dialled in,” he continues. “When this is clearly better than Zoom for VC. When you’re doing your coding or writing in VR. It’s not that far off to imagine that. Pretty soon you’re going to have a screen that’s bigger than… [trails off] It’ll be your ideal setup, you can bring it with you. You can put it on anywhere and have your ideal workstation. I don’t think that’s more than five years off.”

What can we expect in the metaverse?

And it seems there’ll be no shortage of breakthroughs to expect when we get there.

“There are going to be new things that you couldn’t do before,” he suggests. “And those are going to be these amazing experiences. Like teleporting to any place. Whether that’s a real place or something that somebody made. When you’re coding you’re going to be building a world while you’re actually in it. You can say ‘put some trees over there’ or ‘put some water bottles on the picnic blanket’ and it’ll do it. There are going to be new paradigms for coding.”

It’s clear that Zuckerberg sees an enterprise market for the kind of VR hardware and workplace systems Meta are already building.

“You’ll spend most of your computing time in [VR and the metaverse] when it does the things that you currently use computing for somewhat better. Maybe it’s a 5% improvement on your coding productivity? Maybe it’s not a completely new thing? If I could improve the productivity of everyone at Meta by 5% I’d buy those devices for everyone.”

But isn’t all this going to be a bit much for a mass market to swallow? Zuckerberg thinks not, casually proposing concepts such as a thriving market in digital clothing.

Digital clothing and fashion in the metaverse

“Let’s say I buy a digital shirt for my photorealistic avatar,” he throws out. “Which at the time where we’re spending a lot of time in the metaverse – doing a lot of our work meetings in the metaverse – I would imagine that the economy around digital clothing will be as big [as real clothing]. Why wouldn’t I spend almost as much investing in my appearance or expression for my photorealistic avatar for meetings as I would for whatever I’m going to wear for my video chat?” he asks. “I think there’s going to be a huge aspect of people doing creative commerce here. I think there is going to be a big market around people designing digital clothing.”

It appears that Meta’s metaverse future is not only laden with possibility and ingenuity, but is growing increasingly real and could well be closer than you think.

Watch the full podcast video here.

Daniel Griffiths is a veteran journalist who has worked on some of the world's biggest entertainment, home and tech media brands. He's reviewed all the greats, interviewed countless big names, and reported on thousands of releases in the fields of video games, music, movies, tech, gadgets, home improvement, self build, interiors, garden design and more. He’s the ex-Editor of PSM3, GamesMaster, Future Music and ex-Group Editor-in-Chief of Electronic Musician, Guitarist, Guitar World, Computer Music and more. He renovates property and writes fun things for great websites.