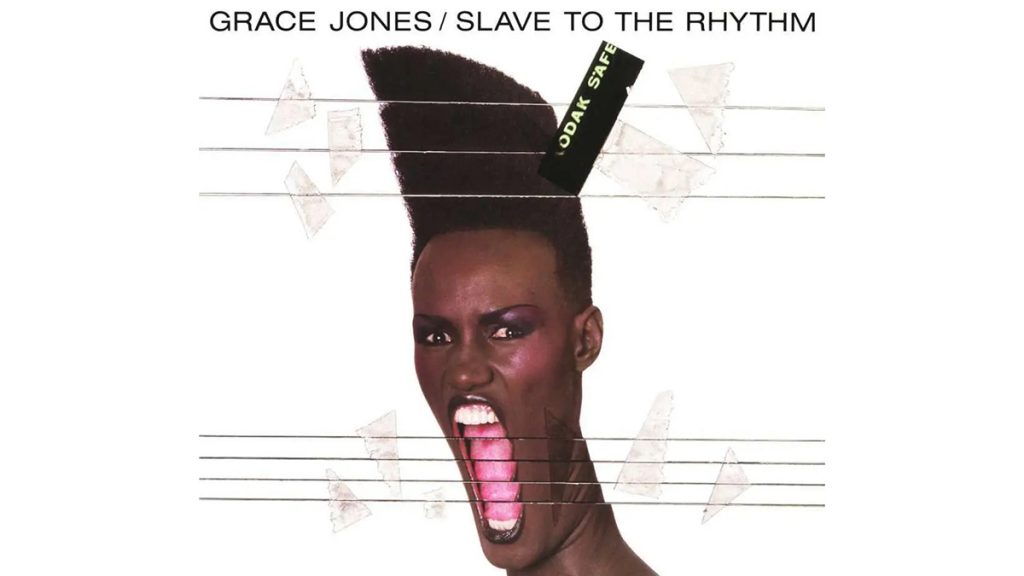

It’s 1985. I’m a silly tweenager. Max Headroom: 20 Minutes into the Future has just been on the TV and I have Slave to the Rhythm, the album by Grace Jones, on tape from Woolworths. Hooked on both, I’m just starting to think (aged 12/13) about where these worlds collide and how I can be a part of it. While my friends are establishing deep connections with A-ha and Spandau Ballet, I’m just here in my friend’s dining room playing on a Commodore 64, planning how I’m gonna tell my parents that I want to create universes for a living.

It did definitely start with Grace Jones, though. The Trevor Horn-produced album, now cited as a masterpiece for sound engineers the world over, was something of a moment in my life – at least in terms of when I started to think about the possibility that there are other stories, other media, and other ways of connecting with that which we hold dear: our identity.

The metaverse – like Slave to the Rhythm, particularly – in my opinion spans a broad sonic range. This is why in 2021, there are a variety of APIs and plug-ins which enable you to connect with your musical identity through platforms such as Discord or Fridai. Because the album’s sonic range is so vast, covering elements of funk and R&B through to deeply experimental vocal slicing, sampling and echoing, it feels very metaversal. Each track is derivative of the main anchor point of the album, which can be a lyric, a chorus or even a tone or semitone.

However, what is particularly important about this album is its cinematic quality. What Grace Jones presents here is biographical and heavy (in a good way), with interviews, excerpts from books, poetry, and even digital techniques which are intimate and personal to Grace Jones, whether through the liner notes or through the sonic interpolations as you journey through the tracks. Blending music with fashion, fine art and film, and eternally challenging common notions of gender, femininity, race and good taste – with today’s metaverse exploration, some 36 years on, we’re surely barely touching the tip of the iceberg of what this album achieved effortlessly?

As an interval, it’s noteworthy to look back upon the impact that Max Headroom has had on the development of the metaverse (the signal hacking incident notwithstanding). I won’t spend this article extolling the brilliance of Max Headroom’s creators George Stone, Annabel Jankel and Rocky Morton – though I definitely should – but what I will say is that the presence of this cybernetic universe was already apparent in 1985, and that the convergence point of these ideals as visual and audio approaches carefully and cartographically delivers us back to the future: to 2021, as though we were always meant to be here.

I’m hoping that HOLOPLOT has an edge on most available sound devices in the metaverse, and that what will be available to us is a system that works the way our mind truly does. Synergy, that’s the word: using a Matrix Array, we’re able to synergise with the music. So whether I’m listening to Opera Attack or the Fashion Show, HOLOPLOT is literally going to allow me the opportunity to place myself inside the action, and that takes sound to an entirely new place within the metaverse. I don’t just want to hear Opera Attack, I want to build a world inside it. And when I hear the Fashion Show, I need the tools to create the feelings I have for it. It’s about manifesting your digital self inside products.

This isn’t a new idea, either; we’ve been developing these liveable systems for years.

There are two things to explore here, both driven by proprietary algorithms: 3D Audio-Beamforming and Wave Field Synthesis. The 3D Audio-Beamforming is about the palette, creating that field to hear everything from the spoken word to even the least exciting synthwave (trust me when I tell you that some people believe synthwave to be the soundtrack to the metaverse – no damn way!). The Wave Field Synthesis makes the sound tighter, as though it’s in your head, and therefore more localised and more perceptible in relation to audio objects.
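
For anyone who wants a feel for what beamforming means in practice, here is a minimal Python sketch of the textbook delay-and-sum idea: each speaker in an array is delayed so that every wavefront reaches a chosen point at the same moment. This is a generic illustration under stated assumptions, not HOLOPLOT's proprietary algorithm; the function name `focus_delays`, the five-speaker line array and the listener position are all hypothetical.

```python
import math

# Approximate speed of sound in air at room temperature (assumption).
SPEED_OF_SOUND = 343.0  # metres per second


def focus_delays(speaker_positions, focus_point, speed=SPEED_OF_SOUND):
    """Return one delay (in seconds) per speaker so that all wavefronts
    arrive at focus_point at the same time (delay-and-sum focusing)."""
    distances = [math.dist(p, focus_point) for p in speaker_positions]
    farthest = max(distances)
    # The farthest speaker plays immediately (delay 0);
    # nearer speakers wait so that their sound does not arrive early.
    return [(farthest - d) / speed for d in distances]


# Hypothetical line array: 5 speakers spaced 0.5 m apart along the x-axis.
speakers = [(i * 0.5, 0.0) for i in range(5)]
# Hypothetical listener standing 2 m in front of the middle speaker.
listener = (1.0, 2.0)

delays = focus_delays(speakers, listener)
for position, delay in zip(speakers, delays):
    print(f"speaker at {position}: delay {delay * 1000:.3f} ms")
```

Moving `listener` and recomputing the delays steers the focus to a different spot, which is the basic intuition behind placing yourself inside the sound.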

Each one of the tracks on Slave to the Rhythm, and on more contemporary concept albums such as Metropolis Pt. 2: Scenes from a Memory by Dream Theater, The Black Parade by My Chemical Romance, and even Sonic Highways by the Foo Fighters (though I prefer 36 Seasons by Ghostface Killah), allows us to create with our minds, letting what we hear affect what we see.

So asking the question again about the metaverse and what it sounds like, it is all of these things: a story, a physical device, a software platform, a vignette or a sketch of what the meta or the overarching narrative actually is.

How does that amorphous blob of digital look in a metaverse, though? Perhaps the GeoCities of 1998/99 is a good place to start. Each one of its villages or zones had lots of different sub-cultural communities, so if the metaverse is indeed going to be soundtracked, it has to be as eclectic as these communities were but loop back into that contextual leitmotif of the “concept”, meaning that there’s always somewhere for the end user or player to come back to.

I started writing this thinking that it would be a very crazy, out-there suggestion that Grace Jones’s Slave to the Rhythm could indeed provide a conversation point for me to talk about what the metaverse sounds like. But with 1985 being so pivotal, it makes sense that in my tiny metaverse-loving birdbrain I can find that convergence point, rather than a juxtaposition, to guide me through thinking about and developing the architecture of the metaverse.

Thinking about it as a tool to go to places and access things about ourselves or others makes sound a fairly strong basis to build the future upon. A bit like playing Catan with that build-trade-settle methodology, the foundation of the metaverse that we hear needs the connection of devices to move us through portals and realms.

That means listening becomes feeling, and sound comes back to being an amazing emotion and a muscle that needs to be stimulated and exercised.

Kelly lives and breathes everything Beyond Games as a futurist and self-described creative badass. And as an experienced game developer, she's worked on titles such as Tomb Raider, Halo 3 and Candy Crush.